It has been a while since posting, but hopefully that will change given I’m now in a technical role again.

As an Architect/Engineer at Pure who previously worked at VMware on the vSAN product, I’m often asked about using Pure Storage with vSAN. It’s a common ask from customers who want to take advantage of some of our awesome features like local snapshots, native replication, offload to NFS/Cloud, SafeMode, and more.

As of right now, we don’t have any specific documentation on how to “add Pure Storage FlashArray to vSAN”, so I thought I’d put something together to cover the process at a high level. It really is pretty simple. There isn’t anything special about adding non-vSAN storage to vSAN nodes (or VxRail nodes, for that matter); just follow the normal process of adding external storage to vSphere.

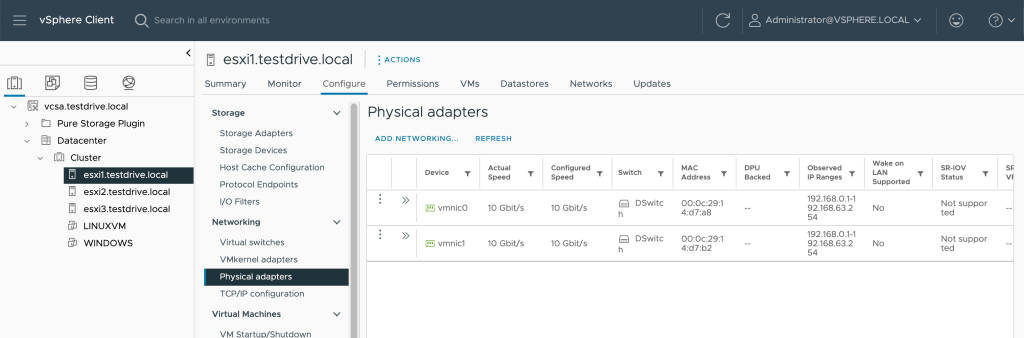

With that said, I walked through the process using a dual-10GbE uplink configuration, similar to some vSAN/VxRail deployments.

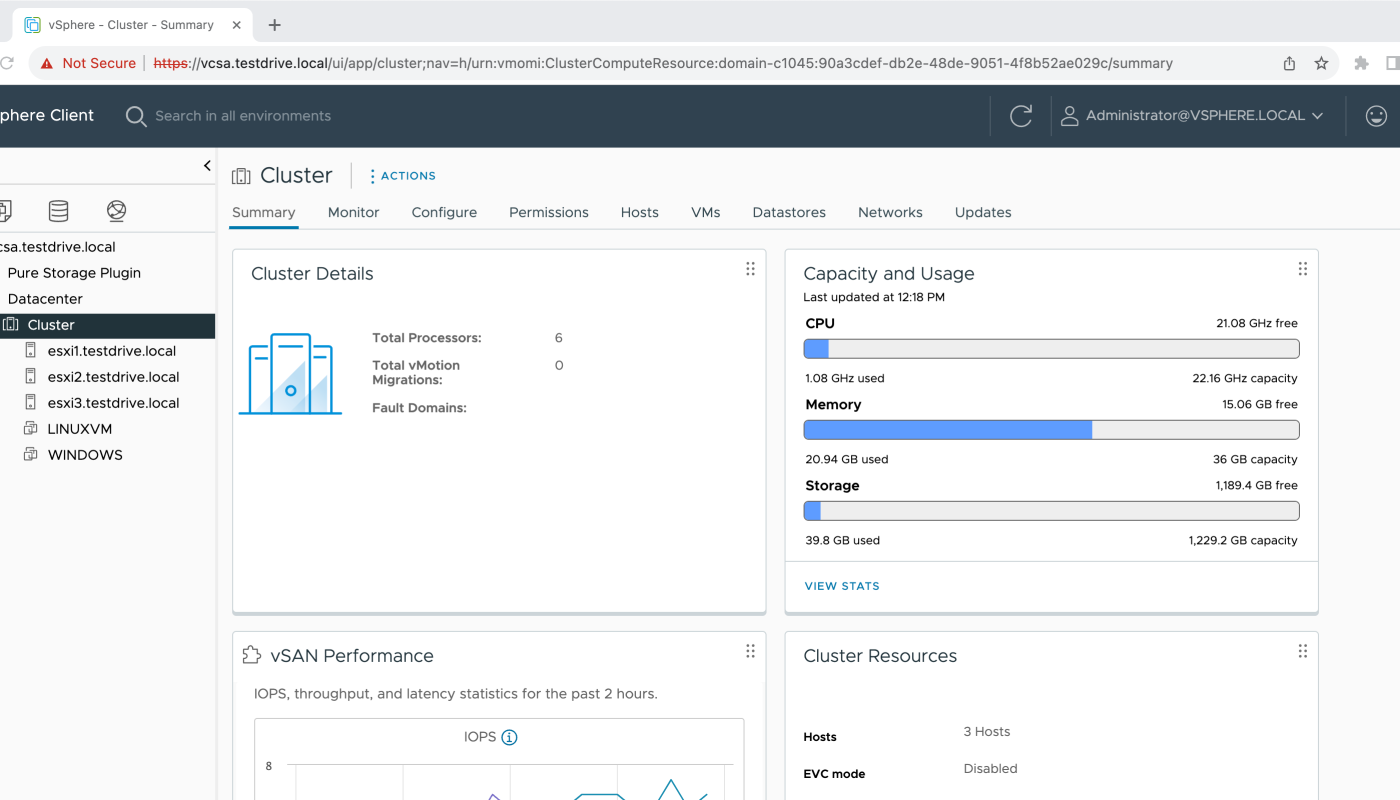

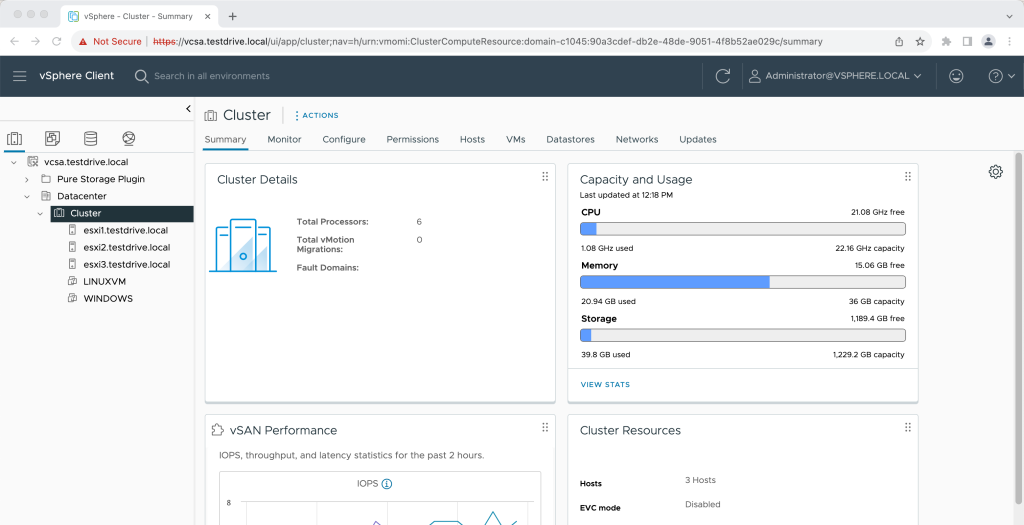

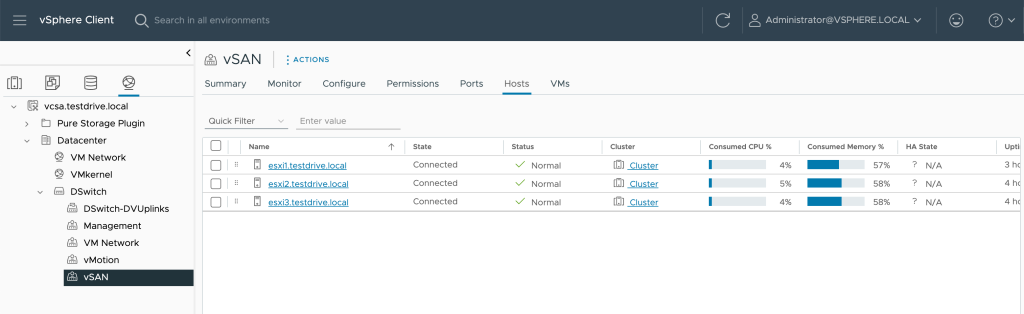

To illustrate the process, I configured a nested VMware vSphere 8.0 U2 vSAN environment.

My vSAN Connectivity Configuration

I configured the environment with 2 “physical” NICs to mimic what might be seen in a small VxRail/vSAN environment.

Like many vSAN/VxRail customers, I’m using a vSphere Distributed Switch to manage the uplinks and port groups.

vSAN Network Configuration Summary – 2 physical NICs included in a vSphere Distributed Switch with Management, VM traffic, vMotion, and vSAN port groups. Out of the box, vSAN, iSCSI, and NFS traffic all have the same priority from a resource allocation (Network I/O Control) perspective within the VDS.
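
To make the “same priority” point concrete: within the VDS, Network I/O Control divides uplink bandwidth by shares, and only when the uplink is congested. The sketch below assumes the standard share levels (Low = 25, Normal = 50, High = 100) and a single saturated 10 Gbps uplink on which only vSAN, iSCSI, and NFS traffic are contending. It ignores reservations, limits, and idle traffic types, so treat it as back-of-the-envelope arithmetic rather than a model of the scheduler.

```python
# Share levels used by vSphere Network I/O Control (NIOC).
SHARE_LEVELS = {"low": 25, "normal": 50, "high": 100}


def allocate_bandwidth(link_gbps: float, traffic: dict[str, str]) -> dict[str, float]:
    """Split a saturated uplink across contending traffic types in proportion
    to their shares. Ignores reservations, limits, and idle traffic types."""
    shares = {name: SHARE_LEVELS[level] for name, level in traffic.items()}
    total = sum(shares.values())
    return {name: link_gbps * s / total for name, s in shares.items()}


if __name__ == "__main__":
    # Default: all three at Normal, so each gets roughly 3.33 Gbps.
    print(allocate_bandwidth(10, {"vsan": "normal", "iscsi": "normal", "nfs": "normal"}))
    # vSAN raised to High: vSAN gets 5 Gbps, iSCSI and NFS 2.5 Gbps each.
    print(allocate_bandwidth(10, {"vsan": "high", "iscsi": "normal", "nfs": "normal"}))
```

In other words, when the two uplinks are busy, external storage traffic competes with vSAN on equal terms unless you adjust the shares.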

Adding Storage to vSAN/VxRail Nodes

The tasks required to attach external storage to vSphere vary depending on the protocol, the type of storage being used, access lists, etc.

For iSCSI storage with FlashArray, the basic process is:

- Configure the iSCSI initiators on vSphere (Pure recommends DelayedAck = False and LoginTimeout = 30). We typically use Dynamic Discovery targets so that the hosts discover and log in to the iSCSI ports on the FlashArray controllers.

- Create the vSphere hosts on FlashArray, identified by their IQNs

- Add the vSphere hosts in each cluster to a Host Group on FlashArray

- Create one or more volumes and connect them to the Host Group

- Create one or more datastores from the vSphere Client using the native workflow.
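
For reference, the initiator settings from the first step can also be applied from the ESXi shell with `esxcli`. The sketch below only builds the command strings; it does not run anything. The adapter name `vmhba64` and the portal addresses in the usage example are hypothetical placeholders for your software iSCSI adapter and FlashArray iSCSI interfaces, so confirm the syntax against your ESXi version and Pure’s current best-practice documentation before using it.

```python
def iscsi_initiator_commands(adapter: str, portals: list[str], port: int = 3260) -> list[str]:
    """Build esxcli commands that apply Pure's recommended initiator settings
    and add each FlashArray iSCSI portal as a dynamic (SendTargets) target.
    Nothing is executed; the strings are meant to be reviewed and pasted."""
    cmds = [
        # Disable DelayedAck and raise LoginTimeout to 30 seconds on the adapter.
        f"esxcli iscsi adapter param set --adapter={adapter} --key=DelayedAck --value=false",
        f"esxcli iscsi adapter param set --adapter={adapter} --key=LoginTimeout --value=30",
    ]
    # Dynamic discovery: one SendTargets entry per FlashArray iSCSI portal.
    for ip in portals:
        cmds.append(
            f"esxcli iscsi adapter discovery sendtarget add --adapter={adapter} --address={ip}:{port}"
        )
    # Rescan so newly discovered targets (and any connected volumes) show up.
    cmds.append(f"esxcli storage core adapter rescan --adapter={adapter}")
    return cmds


if __name__ == "__main__":
    # Hypothetical adapter and portal IPs (one per controller).
    for cmd in iscsi_initiator_commands("vmhba64", ["192.168.10.10", "192.168.10.11"]):
        print(cmd)
```

The Pure Storage Plugin handles much of this for you, but seeing the individual settings helps when checking a host that was configured by hand.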

Fibre Channel storage follows essentially the same process as the last four iSCSI steps above, but hosts are identified by their WWNs rather than their IQNs.
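
WWPNs are easy to mistype or to copy in mixed formats (with or without colons, upper- or lowercase). A small helper like the hypothetical `normalize_wwn` below can sanity-check that each value is a 64-bit WWN (16 hex digits) and print it in colon-separated form before you enter it on FlashArray. It is an illustration only, not part of any Pure or VMware tool.

```python
import re


def normalize_wwn(raw: str) -> str:
    """Return a WWN as lowercase, colon-separated byte pairs.

    Accepts input with or without ':' or '-' separators and raises
    ValueError if the result is not exactly 16 hex digits (64 bits)."""
    digits = re.sub(r"[:\-\s]", "", raw).lower()
    if not re.fullmatch(r"[0-9a-f]{16}", digits):
        raise ValueError(f"not a valid 64-bit WWN: {raw!r}")
    return ":".join(digits[i:i + 2] for i in range(0, 16, 2))


if __name__ == "__main__":
    # Hypothetical WWPN as it might be copied from a host's HBA details.
    print(normalize_wwn("2100000E1E2A3B4C"))  # 21:00:00:0e:1e:2a:3b:4c
```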

For vVols storage, we’ll first want to have either iSCSI or Fibre Channel connectivity in place and then:

- Register the FlashArray VASA storage provider in vCenter

- Ensure a Protocol Endpoint is present on FlashArray and attach it to the vSphere Host Group

- Create a vVol datastore using the vSphere Client native workflow.
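
FlashArray runs a VASA provider on each controller, so registration usually means adding one storage provider URL per controller. The sketch below assumes the commonly documented default port of 8084 and uses hypothetical controller management IPs; verify both for your Purity version, and note that the Pure Storage Plugin can perform this registration for you.

```python
import ipaddress

# Assumed default FlashArray VASA provider port; verify for your Purity version.
VASA_PORT = 8084


def vasa_provider_urls(ct0_mgmt_ip: str, ct1_mgmt_ip: str) -> list[str]:
    """Build one VASA provider URL per FlashArray controller (CT0 and CT1),
    validating the addresses so a typo is caught before registration."""
    urls = []
    for ip in (ct0_mgmt_ip, ct1_mgmt_ip):
        addr = ipaddress.ip_address(ip)  # raises ValueError on a malformed address
        host = f"[{addr}]" if addr.version == 6 else str(addr)  # bracket IPv6 literals
        urls.append(f"https://{host}:{VASA_PORT}")
    return urls


if __name__ == "__main__":
    # Hypothetical CT0/CT1 management addresses.
    for url in vasa_provider_urls("10.0.0.21", "10.0.0.22"):
        print(url)
```

Each URL is then added as a storage provider in vCenter, after which the Protocol Endpoint and vVol datastore steps follow.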

For NFS storage with FlashArray, the basic process is:

- Configure FlashArray for File Services and create one or more file systems for use with NFS

- Configure a FlashArray File System export policy that includes the VMkernel addresses of the hosts that will mount the NFS export as a datastore

- Assign the export policy and quota policy to the file system presented from FlashArray

- Mount the NFS export(s) from FlashArray to vSphere as a datastore using the vSphere Client native workflow.
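
Before mounting, it can help to confirm that the export policy’s client list actually covers every host’s NFS VMkernel address, since a host that isn’t covered will fail to mount the datastore. The sketch below assumes the policy’s client entries are single IP addresses or CIDR subnets; the host names and addresses are hypothetical.

```python
import ipaddress


def uncovered_hosts(vmk_ips: dict[str, str], export_clients: list[str]) -> list[str]:
    """Return the hosts whose NFS VMkernel IP is not matched by any
    export-policy client entry (single IPs or CIDR subnets)."""
    # strict=False lets a bare IP such as "192.168.21.13" become a /32 network.
    networks = [ipaddress.ip_network(c, strict=False) for c in export_clients]
    missing = []
    for host, ip in vmk_ips.items():
        addr = ipaddress.ip_address(ip)
        if not any(addr in net for net in networks):
            missing.append(host)
    return missing


if __name__ == "__main__":
    # Hypothetical NFS VMkernel addresses for a three-node cluster.
    vmk = {"esx01": "192.168.20.11", "esx02": "192.168.20.12", "esx03": "192.168.21.13"}
    print(uncovered_hosts(vmk, ["192.168.20.0/24"]))  # ['esx03']
```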

Pretty straightforward process, regardless of whether vSAN, VxRail, or vanilla vSphere is used.

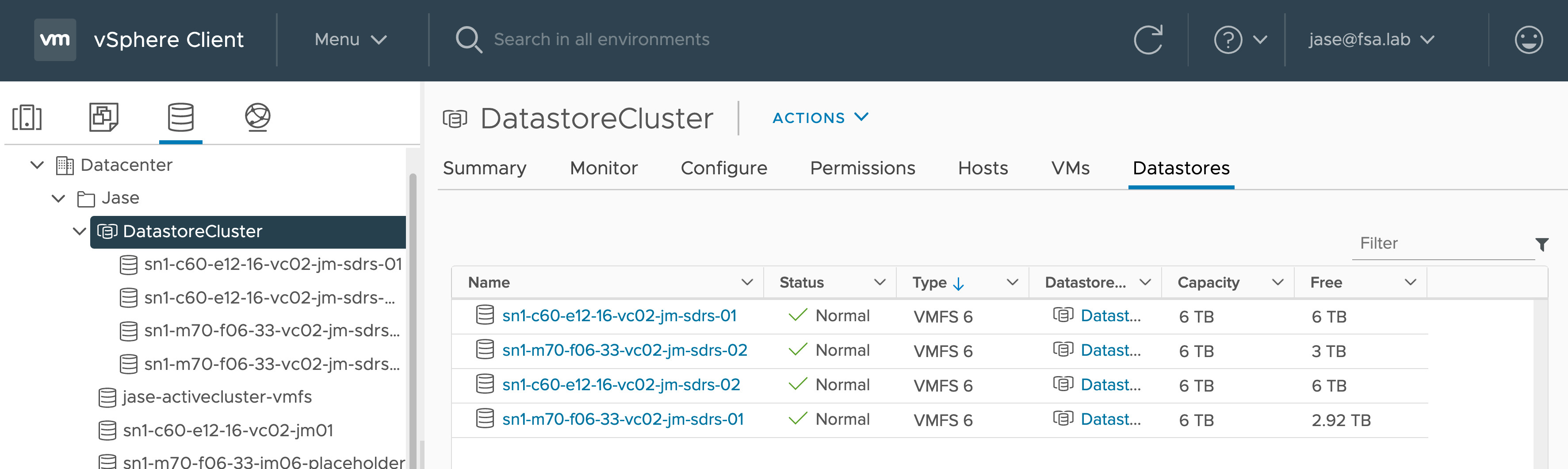

Rather than going through these steps manually, I’m going to use the Pure Storage Plugin for VMware vSphere to accomplish these tasks.

Install the Pure Storage Plugin for VMware vSphere

Installing the Pure Storage Plugin for VMware vSphere makes it significantly easier to add FlashArray-based datastores to your VMware environment.

Pure customers can find complete documentation on how to download and install the Pure Storage Plugin here.

Essentially, the process is:

- Download the OVA (or import directly into vSphere)

- Configure the OVA to install the plugin (or choose None and perform an offline installation for dark sites)

- Register one or more FlashArrays for use by vSphere

Next Steps

In Part 2, I will cover how to add iSCSI storage to the vSAN cluster with the latest (5.3.4) plugin and use it to create one or more VMFS datastores.