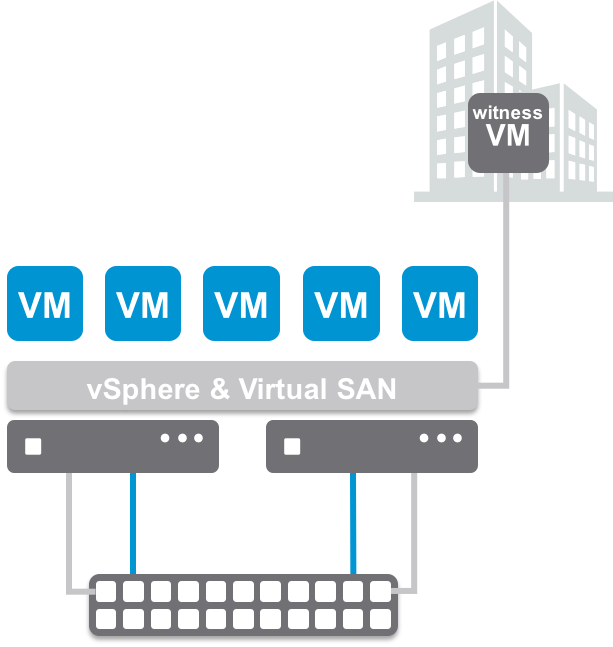

I was working with a customer last week, going over the configuration, setup, and requirements of Virtual SAN 6.1 when deploying a 2 node configuration. “Technically” this is a 2 node stretched cluster, made up of two data nodes and a witness. Really a 1+1+1 configuration.

One of the reasons for the call was some confusion about the setup, which is fortunately documented in the Virtual SAN 6.1 Stretched Cluster Guide. Cormac Hogan created the initial content, and I took care of a few updates, as well as adding some content specific to 2 node configurations, which are common in Remote Office/Branch Office type deployments.

I pointed the customer to the DOM Owner Force Warm Cache setting in the Stretched Cluster guide.

They mentioned that the setting was already 0 for their configuration. I logged into a stretched cluster lab environment, and noticed that it was also 0. I then logged into a traditional Virtual SAN configuration and yet again, DOM Owner Force Warm Cache was 0. Hmm. This is where I started to scratch my head.

I asked Cormac about the setting, as he is the original author of the Stretched Cluster Guide. Maybe we’ve got a bug? After a few back and forth conversations with engineering, we were told this was correct. What’s missing?

The setting DOM Owner Force Warm Cache is actually irrelevant to traditional Virtual SAN clusters. It comes into effect when stretched cluster configurations are used.

When the DOM Owner Force Warm Cache setting is True (1), it forces reads across all mirrors to use cache space most effectively. This means reads occur across both sites in a stretched cluster config.

When it is False (0), as my customer observed, site locality is in effect and reads occur only on the site where the VM resides.

In short, DOM Owner Force Warm Cache:

- Doesn’t apply to traditional VSAN clusters

- Stretched Cluster configs with acceptable latency & site locality enabled – Default 0 (False)

- 2 Node (typically very low latency) – Modify to 1 (True)
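The summary above can be sketched as a small lookup. This is only an illustration: the function name and the deployment labels (`two-node`, `stretched`, `traditional`) are made up for this example and are not Virtual SAN terms.

```shell
# Hypothetical helper: map a deployment type to the DOMOwnerForceWarmCache
# value summarized above. The labels are illustrative, not VSAN terminology.
recommended_force_warm_cache() {
    case "$1" in
        two-node)    echo 1 ;;  # read from both mirrors
        stretched)   echo 0 ;;  # keep site locality (the default)
        traditional) echo 0 ;;  # setting has no effect; leave the default
        *)
            echo "unknown deployment type: $1" >&2
            return 1
            ;;
    esac
}
```

For example, `recommended_force_warm_cache two-node` prints `1`.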

Not only does this help in the event of a VM moving across hosts, which would require cache to be rewarmed, but it also allows reads to occur across both mirrors, distributing the load more evenly across both hosts. If you’ve got a 2 node Virtual SAN cluster, it might be advantageous to enable this setting.

To check the status, run the following command:

esxcfg-advcfg -g /VSAN/DOMOwnerForceWarmCache

To set it for 2 Node clusters:

esxcfg-advcfg -s 1 /VSAN/DOMOwnerForceWarmCache
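If you would rather not set the value blindly, the two commands can be combined into a check-then-set wrapper. This is a minimal sketch, not something from the guide. It assumes `esxcfg-advcfg -g` prints a line of the form `Value of DOMOwnerForceWarmCache is 0`. The function names and the `ADVCFG_CMD` override are hypothetical conveniences for this example.

```shell
# Command used to read and write advanced settings. The ADVCFG_CMD override
# is a hypothetical convenience so the logic can be tried without a host.
ADVCFG_CMD="${ADVCFG_CMD:-esxcfg-advcfg}"
OPTION="/VSAN/DOMOwnerForceWarmCache"

# Print the current numeric value.
# Assumes output shaped like: Value of DOMOwnerForceWarmCache is 0
get_force_warm_cache() {
    "$ADVCFG_CMD" -g "$OPTION" |
        sed -n 's/^Value of .* is \([0-9][0-9]*\).*$/\1/p'
}

# Set the option to 1 only if it is not already 1.
enable_force_warm_cache() {
    current="$(get_force_warm_cache)"
    if [ -z "$current" ]; then
        echo "could not read $OPTION" >&2
        return 1
    fi
    if [ "$current" = "1" ]; then
        echo "already enabled"
        return 0
    fi
    "$ADVCFG_CMD" -s 1 "$OPTION" >/dev/null && echo "enabled"
}
```

Calling `enable_force_warm_cache` prints `enabled` the first time and `already enabled` after that. Since `esxcfg-advcfg` changes the setting on the host where it runs, this would need to be run on each data node.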

Looks like I need to update the stretched cluster guide.